When off-the-shelf models aren’t good enough for a specific task, fine-tune one that is. A task-specific model is typically smaller, faster, and cheaper to run than the general-purpose model it replaces, while being more accurate for your workload. This guide uses the Customer Support Chatbot demo project to walk through the full loop: launching a training job, monitoring progress, deploying the result, and evaluating the trained model. No data of your own is needed: the demo project comes with everything pre-loaded.

Start the demo project

If you already created the demo project during Run Your First Eval, you’re all set: open it from the dashboard and skip to the next section. Otherwise:

- From the dashboard, navigate to the Learn page (or the Create a Project page).

- Find Customer Support Chatbot and click Start with demo project.

The demo project comes with three artifacts that training uses:

| Artifact | Name | Role in training |
|---|---|---|
| Training dataset | customer-support-train | The data the model learns from |
| Eval dataset | customer-support-eval | A held-out set used to measure learning progress; must have zero overlap with training data |
| Rubric | Customer support rubric | Defines the quality criteria the LLM judge scores against during and after training |
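The zero-overlap requirement on the eval dataset is worth checking whenever you bring your own data. The following is a minimal sketch, assuming each dataset is available as a list of records with a `prompt` field; the field name and the in-memory format are illustrative assumptions, not the platform's export schema.

```python
def normalize(text):
    """Lowercase and collapse whitespace so formatting differences do not hide duplicates."""
    return " ".join(text.lower().split())


def find_overlap(train_rows, eval_rows, key="prompt"):
    """Return the sorted, normalized values of `key` that appear in both datasets."""
    train_keys = {normalize(row[key]) for row in train_rows}
    eval_keys = {normalize(row[key]) for row in eval_rows}
    return sorted(train_keys & eval_keys)
```

An empty list means no normalized prompt is shared between the two sets. Near-duplicates that differ by more than case or whitespace would need a fuzzier comparison than this sketch performs.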

Train a model

Create a new training job

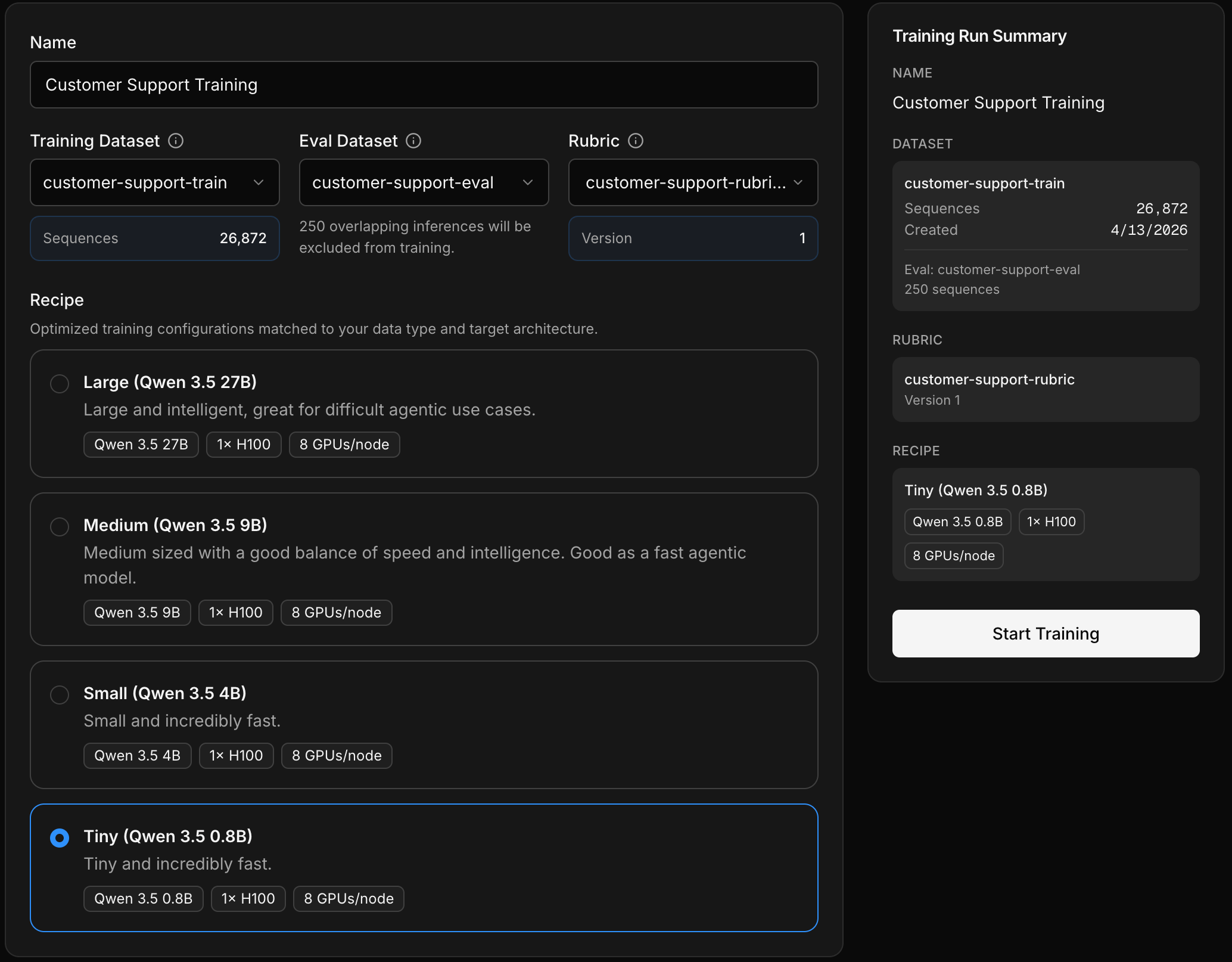

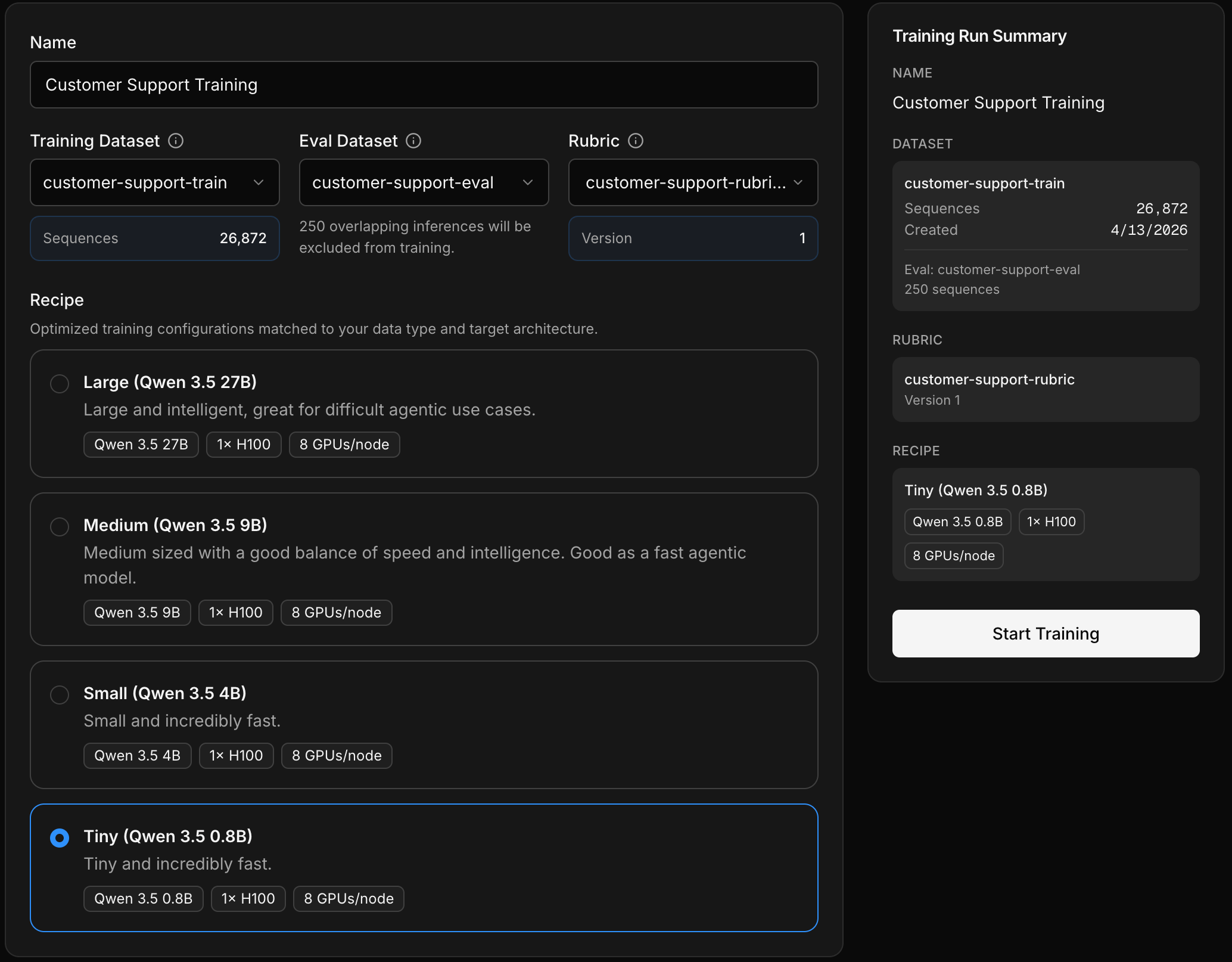

Open the Training tab in your project and click New Training Job.

Select the three inputs from your demo project:

- Training dataset: customer-support-train

- Eval dataset: customer-support-eval

- Rubric: the customer support rubric

Choose a recipe

Next, pick a recipe — a pre-configured training setup with a base model, optimized parameters, and compute config. For this demo, the smallest recipe works well and will finish the fastest.

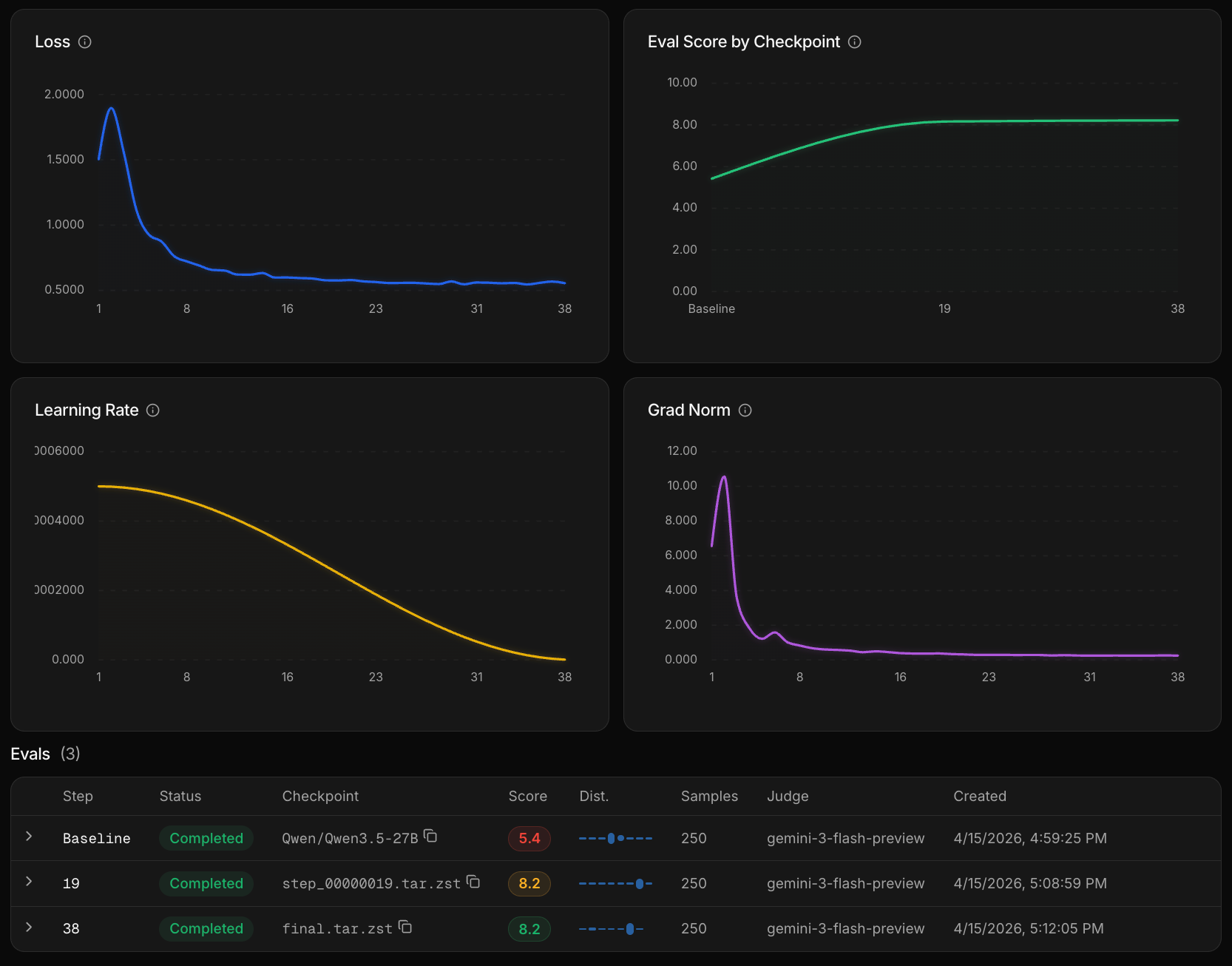

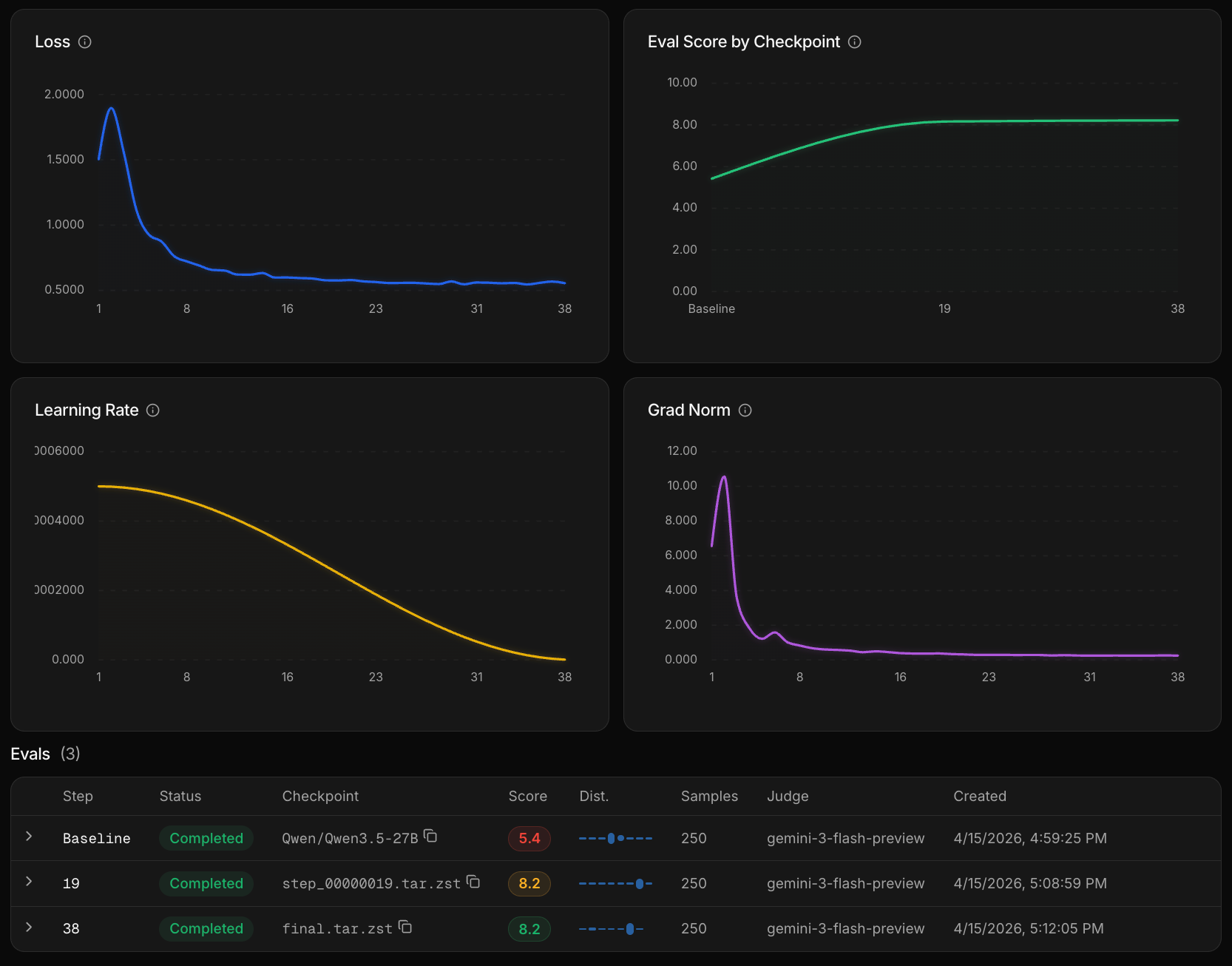

Monitor training progress

During training, the platform periodically runs the customer-support-eval dataset through your model-in-progress and scores the outputs using the rubric. You can watch these mid-training eval scores update in real time.

- Scores improving: training is on track and continues

- Scores degrading: training stops early to prevent overfitting
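The stop-or-continue behavior above resembles patience-based early stopping. The sketch below shows that general technique only; the platform's actual stopping criteria are not described here, so the `patience` and `min_delta` parameters are assumptions made for illustration.

```python
def should_stop_early(scores, patience=2, min_delta=0.0):
    """Return True when none of the last `patience` evals beat the best earlier score.

    scores: mid-training eval scores in chronological order (higher is better).
    """
    if len(scores) <= patience:
        return False  # not enough history to judge yet
    best_before = max(scores[:-patience])
    recent = scores[-patience:]
    return all(score <= best_before + min_delta for score in recent)
```

With the default `patience=2`, a run whose scores keep rising continues, while a run whose last two evals both fall at or below its earlier best is stopped.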

Deploy the trained model

Deploy

When training completes, your model is automatically registered and ready to deploy. Navigate to Deployments, name your deployment, and click Deploy. The GPU spins up in a few minutes depending on model size.

Call your model
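A call to a deployed model might look like the following sketch, which uses only the Python standard library. It assumes an OpenAI-compatible chat completions endpoint at `https://api.inference.net/v1` and an OpenAI-style response shape; treat both as assumptions and confirm the exact values in Call Your Deployment. `your-org/your-trained-model` is a placeholder, and the helper names are hypothetical.

```python
import json
import urllib.request

# Assumed base URL; confirm the real value in the API documentation.
BASE_URL = "https://api.inference.net/v1"


def build_chat_request(model, user_message, api_key, base_url=BASE_URL):
    """Build (but do not send) a POST request for an OpenAI-style chat completion."""
    payload = {
        "model": model,  # the only field that changes for a trained model
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send_chat_request(request):
    """Send the request and return the reply text, assuming an OpenAI-style response."""
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

To try it, pass `model="your-org/your-trained-model"` and your API key to `build_chat_request`, then hand the result to `send_chat_request`. Keeping the build and send steps separate makes it easy to see that only the `model` field differs from a call to any other model.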

Once deployed, you call it the same way you’d call any model through the Inference API (same base URL, same headers); just swap the model parameter to your trained model’s identifier. In your request, replace the placeholder your-org/your-trained-model with the model identifier shown on your deployment page. See Call Your Deployment for the full setup guide.

Evaluate the trained model

Once a model has finished training, you can run evals against it alongside any other model, with no deployment required. This lets you iterate on your data and retrain for better results before you deploy and take it to production.

Run an eval with your trained model

Go to the Evals tab and create a new eval. Select the

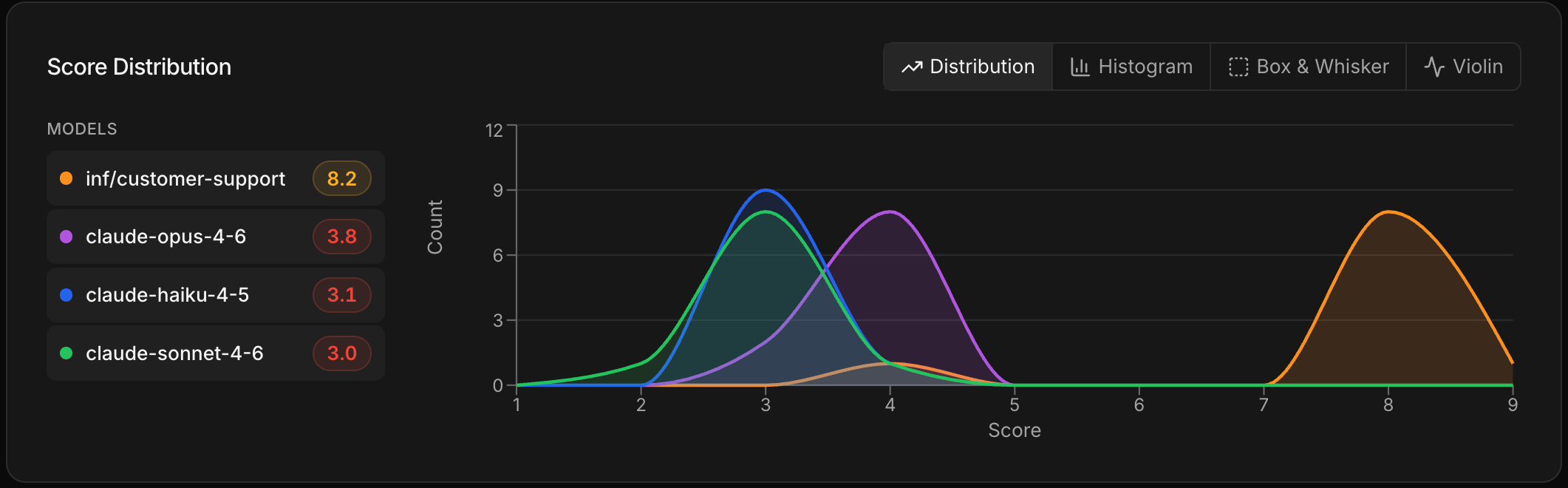

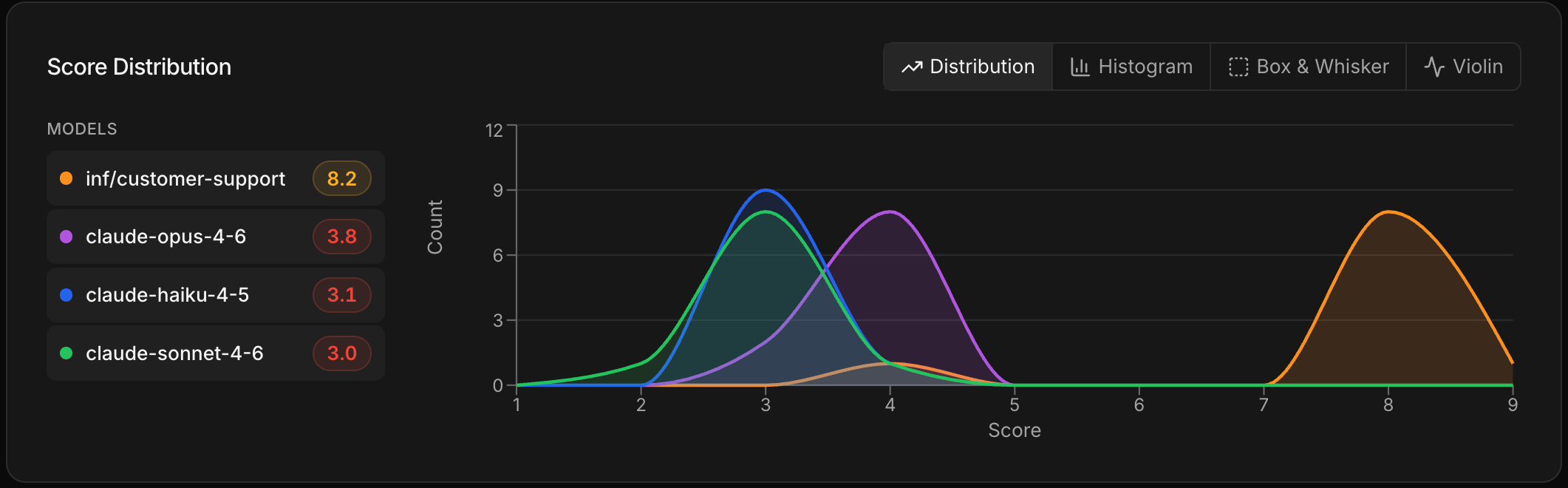

customer-support-eval dataset and the project rubric — the same ones used during training. This time, add your trained model alongside one or more off-the-shelf models.Compare the results

The comparison view shows how your trained model scores against the others on the same rubric. Since the model was trained specifically on this task, you should see it perform competitively — often matching or beating larger general-purpose models on quality, while being smaller and cheaper to run. If the results aren’t where you want them, refine your training data and retrain before deploying.
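If you have per-example rubric scores for each model, the same ranking can be sketched in a few lines. This assumes a plain mapping from model name to a list of numeric scores; that format is a hypothetical stand-in, not a documented export from the platform.

```python
from statistics import mean


def rank_models(scores_by_model):
    """Return (model, mean score) pairs sorted from best to worst mean rubric score."""
    averages = {
        model: mean(scores)
        for model, scores in scores_by_model.items()
        if scores  # skip models with no scored examples
    }
    return sorted(averages.items(), key=lambda item: item[1], reverse=True)
```

A mean hides per-example variation, so it is worth reading individual low-scoring outputs before deciding whether to retrain.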

What you just learned

- Training teaches a model your specific task using your data, with the rubric guiding quality during the process

- Mid-training evals give you visibility into whether training is working before it finishes

- Deployment puts the trained model behind the same API you already use — no code changes beyond swapping the model name

- Post-training evals let you validate that the trained model actually outperforms alternatives on your criteria

Next steps

Choose a recipe

Understand recipe tiers and how to pick the right one for your task.

Launch a training run

The full training flow with your own data — cost and duration estimates included.

Call your deployment

Full production setup for calling your deployed model.

Monitor with Observe

Track your deployed model’s cost, latency, and quality over time.