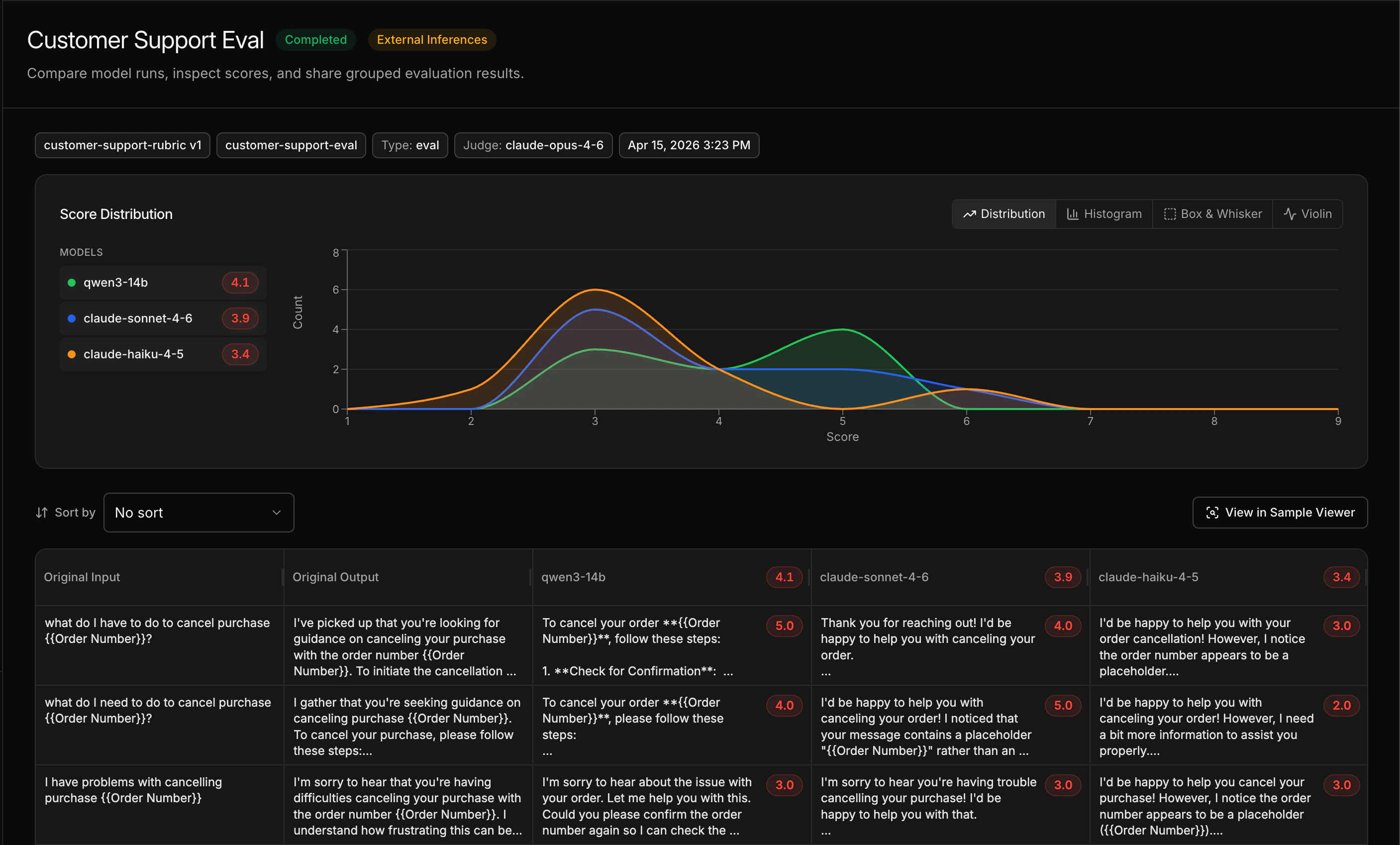

An eval measures which model is better for your task, and by how much. You define a rubric that describes what “good” looks like, run your data through candidate models, and let an LLM judge score the outputs. This is how you know whether a smaller, cheaper model can replace the one you’re using today.

This guide uses the Customer Support Chatbot demo project, which comes pre-loaded with a dataset and rubric, so you can run an eval immediately with no data of your own. Once you’ve seen how it works, you can apply the same process to your own data.

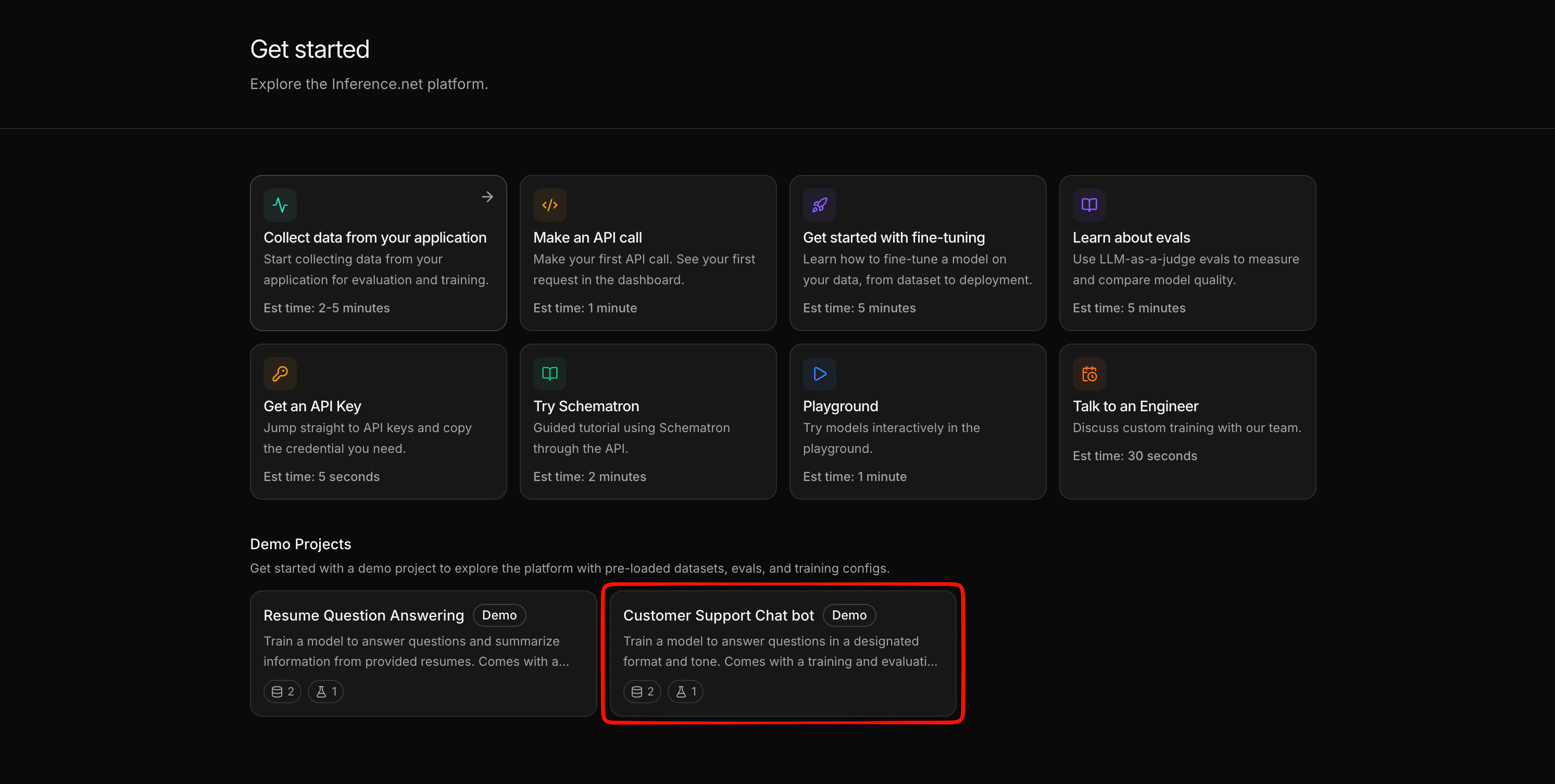

Start the demo project

If you haven’t already, create the demo project:

- From the dashboard, navigate to the Learn page (or the Create a Project page).

- Find Customer Support Chatbot and click Start with demo project.

The demo project includes the following artifacts:

| Artifact | Name | Purpose |
|---|---|---|
| Eval dataset | customer-support-eval | Sample customer support conversations to evaluate against |
| Training dataset | customer-support-train | Used later for training a model |
| Rubric | Customer support rubric | Defines what a good customer support response looks like: tone, format, and accuracy criteria |

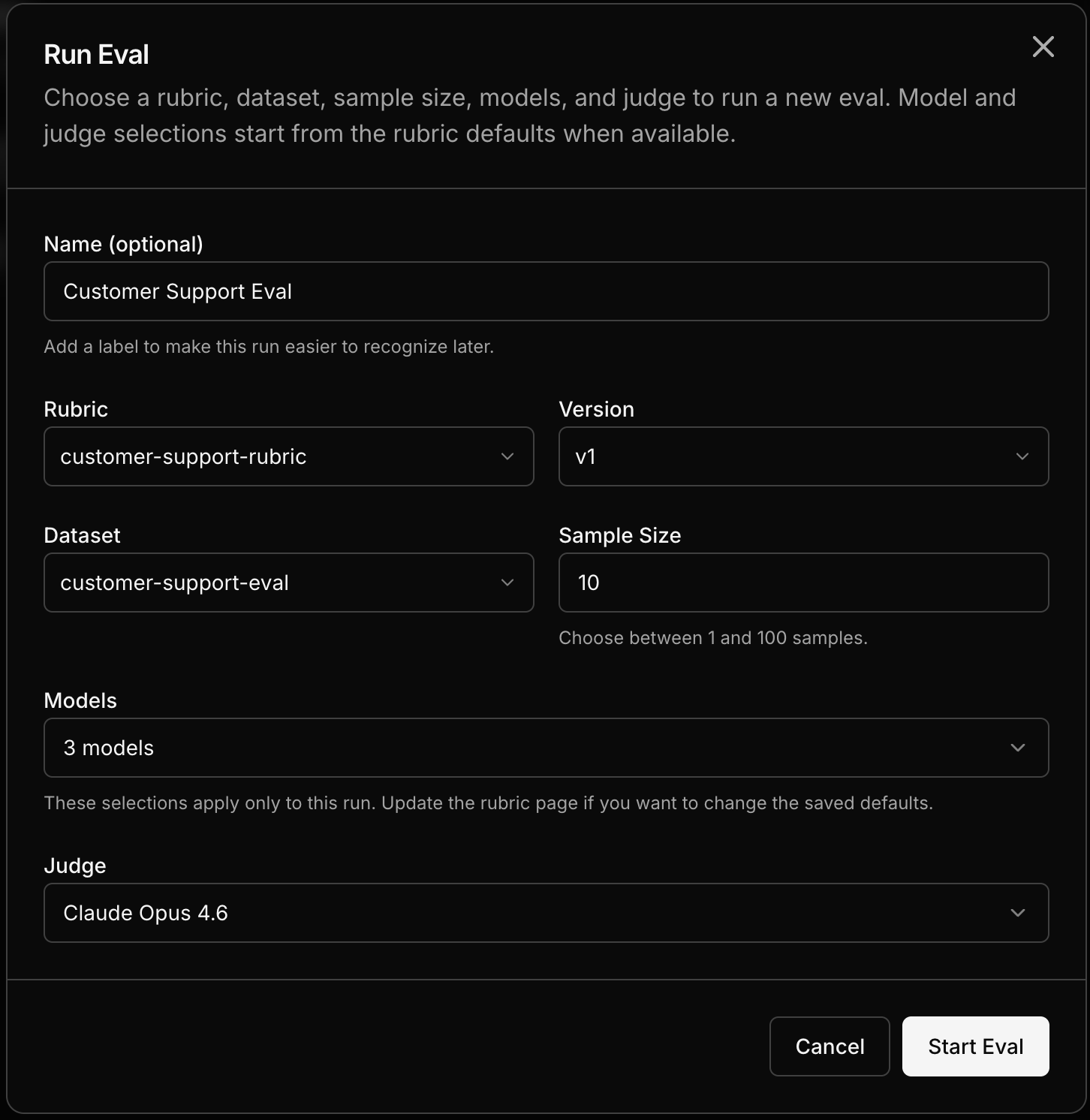

Run an eval

Navigate to Evals

Open your Customer Support Chatbot project and go to the Evals tab. Click New Eval.

Select the rubric and dataset

The demo project’s rubric and the customer-support-eval dataset are already available in your project. Select them.

Pick models to compare

Choose two or more models to evaluate. You can pick any combination from the model catalog — OpenAI, Anthropic, open-source, or any other available model. For a quick comparison, try picking a large model and a smaller one to see how they stack up.

Run the eval

Click Run. Each sample from the dataset is sent to each model, and an LLM judge scores every response against the rubric.
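Conceptually, the run described above is a nested loop over samples and models, followed by a scoring pass. The sketch below is only an illustration of that idea, not the platform's implementation: `run_eval`, the stub models, and `toy_judge` are hypothetical names, and the judge here is a trivial string check standing in for a real LLM judge.

```python
from statistics import mean
from typing import Callable, Dict, List

# Hypothetical stand-ins: a "model" maps a prompt to a response, and a
# "judge" maps (rubric, prompt, response) to a numeric score.
Model = Callable[[str], str]
Judge = Callable[[str, str, str], float]


def run_eval(
    samples: List[str],
    models: Dict[str, Model],
    judge: Judge,
    rubric: str,
) -> Dict[str, float]:
    """Send every sample to every model, score each response against the
    rubric, and return the mean score per model."""
    scores: Dict[str, List[float]] = {name: [] for name in models}
    for prompt in samples:
        for name, model in models.items():
            response = model(prompt)
            scores[name].append(judge(rubric, prompt, response))
    return {name: mean(values) for name, values in scores.items()}


# Toy stubs so the sketch runs offline (assumptions, not real models).
def large_model(prompt: str) -> str:
    return f"Thanks for reaching out. Here is how to {prompt}."


def small_model(prompt: str) -> str:
    return "ok"


def toy_judge(rubric: str, prompt: str, response: str) -> float:
    # A real judge would be an LLM reading the rubric; this stub only
    # checks whether the response mentions the customer's request.
    return 1.0 if prompt in response else 0.0


results = run_eval(
    samples=["reset my password", "cancel my order"],
    models={"large": large_model, "small": small_model},
    judge=toy_judge,
    rubric="Be polite and address the customer's question.",
)
print(results)
```

Swapping the stubs for real model calls and an LLM-backed judge keeps the same shape: one response and one score per (sample, model) pair.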

What you just learned

- Rubrics define your quality bar in plain English — the LLM judge uses them to score outputs

- Evals run your data through multiple models and score the results, giving you a data-driven comparison

- You can re-run evals anytime — after changing the rubric, adding models, or later after training a custom model to see how it compares
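One way to act on the comparison summarized above is to decide up front how much of a score drop you would accept in exchange for a cheaper model. The following is a minimal sketch under that assumption; `can_replace`, the `max_drop` threshold, and the scores are all made up for illustration and are not output from the demo project.

```python
def can_replace(
    baseline_score: float,
    candidate_score: float,
    max_drop: float = 0.05,
) -> bool:
    """Return True if the candidate's mean score is no more than
    max_drop below the baseline's mean score."""
    return baseline_score - candidate_score <= max_drop


# Illustrative mean judge scores on a 0-1 scale (not real results).
mean_scores = {"large-model": 0.90, "small-model": 0.87}

if can_replace(mean_scores["large-model"], mean_scores["small-model"]):
    print("small-model is within the accepted quality gap")
else:
    print("small-model falls short of the quality bar")
```

The right threshold depends on your task; re-running the eval after a rubric change or after training a custom model gives you new scores to feed into the same check.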

Next steps

- Train a custom model: Use the same demo project to train and deploy a model.

- Write a rubric: Learn how to write your own rubrics for your specific use case.

- Read the results: Deep dive on interpreting the comparison view.

- Build a dataset: Create datasets from your own data, either captured traffic or uploaded files.