Fine-tuning vs distillation

| Approach | Best for |
|---|---|
| Fine-tuning | Improving quality on a task where the base model is close but not good enough |
| Distillation | Preserving task quality while moving into a smaller, faster, cheaper student model |

The self-serve workflow

1. Capture or import representative data in /observe/datasets-and-uploads.

2. Define a baseline in /evaluate/overview.

3. Launch a training run against paired training and eval datasets.

4. Compare the resulting model against the baseline.

5. Promote the winner into /deploy/overview.
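
The baseline, compare, and promote steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the platform's API: `EvalExample`, `accuracy`, `should_promote`, the exact-match scoring, and the `min_gain` threshold are all hypothetical names and choices made for this example, and the two "models" are lookup tables standing in for real ones.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class EvalExample:
    """One row of a hypothetical eval dataset."""
    prompt: str
    expected: str


def accuracy(model: Callable[[str], str], examples: Sequence[EvalExample]) -> float:
    """Fraction of examples where the model output exactly matches the expected answer."""
    if not examples:
        raise ValueError("eval dataset is empty")
    correct = sum(1 for ex in examples if model(ex.prompt).strip() == ex.expected)
    return correct / len(examples)


def should_promote(baseline_score: float, candidate_score: float, min_gain: float = 0.02) -> bool:
    """Promote only when the candidate beats the baseline by at least min_gain (assumed rule)."""
    return candidate_score - baseline_score >= min_gain


# Toy eval dataset; in practice this is the eval half of the paired datasets.
eval_set = [
    EvalExample("2+2", "4"),
    EvalExample("capital of France", "Paris"),
    EvalExample("3*3", "9"),
    EvalExample("opposite of hot", "cold"),
]

# Lookup tables standing in for the baseline and the newly trained model.
baseline_answers = {"2+2": "4", "capital of France": "Paris"}
candidate_answers = {"2+2": "4", "capital of France": "Paris", "3*3": "9"}


def baseline_model(prompt: str) -> str:
    return baseline_answers.get(prompt, "")


def candidate_model(prompt: str) -> str:
    return candidate_answers.get(prompt, "")


baseline_score = accuracy(baseline_model, eval_set)    # 0.5
candidate_score = accuracy(candidate_model, eval_set)  # 0.75
promote = should_promote(baseline_score, candidate_score)
```

Both models are scored on the same eval set, so the comparison isolates the effect of training. For a distillation run, where the goal is to preserve quality rather than raise it, a small negative `min_gain` (a tolerated regression) may be the more natural rule.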

What to optimize for

- Higher task accuracy on the prompts that matter to your product

- Lower cost by distilling into a smaller student model

- Lower latency for user-facing workflows

- Tighter output behavior for structured extraction, tagging, or classification workloads
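
Two of these goals, tighter structured output and lower latency, are straightforward to measure offline. The sketch below assumes the model is expected to return a JSON object with a fixed set of keys; `REQUIRED_KEYS`, `is_valid_extraction`, `validity_rate`, and `p95_latency_ms` are hypothetical helpers written for this example, and the sample outputs and latencies are made up.

```python
import json
import statistics
from typing import Iterable, Sequence

# Assumed schema for a classification workload: every output must carry these keys.
REQUIRED_KEYS = ("label", "confidence")


def is_valid_extraction(raw_output: str, required_keys: Iterable[str]) -> bool:
    """True when the output parses as a JSON object containing every required key."""
    try:
        parsed = json.loads(raw_output)
    except json.JSONDecodeError:
        return False
    return isinstance(parsed, dict) and all(key in parsed for key in required_keys)


def validity_rate(outputs: Sequence[str], required_keys: Sequence[str]) -> float:
    """Share of outputs that satisfy the schema check."""
    if not outputs:
        raise ValueError("no outputs to score")
    return sum(is_valid_extraction(o, required_keys) for o in outputs) / len(outputs)


def p95_latency_ms(latencies_ms: Sequence[float]) -> float:
    """95th-percentile latency; needs at least two samples."""
    # With n=20 there are 19 cut points, and the last one is the 95th percentile.
    # method="inclusive" keeps the result inside the observed range.
    return statistics.quantiles(latencies_ms, n=20, method="inclusive")[-1]


# Made-up sample data for illustration.
outputs = [
    '{"label": "spam", "confidence": 0.93}',
    '{"label": "ham", "confidence": 0.71}',
    "label: spam",        # not JSON
    '{"label": "ham"}',   # missing "confidence"
]
latencies_ms = [100, 200, 300, 400, 500, 600, 700, 800, 900, 1000]

rate = validity_rate(outputs, REQUIRED_KEYS)  # 0.5
p95 = p95_latency_ms(latencies_ms)
```

Tracking the same numbers for the baseline and the trained model makes it easier to see whether a gain in one goal came at the expense of another.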

Before you train

Do not skip the eval step. Training without a stable rubric and representative eval dataset makes it much harder to tell whether the new model is actually better.

Next steps

Turn Eval Failures into a Training Run

Use a stable eval baseline and paired datasets to launch training the right way.

Create Datasets from Observed Traffic

Build the paired training and eval datasets required for self-serve training jobs.

Create datasets

Build the training and eval data you want to optimize against.

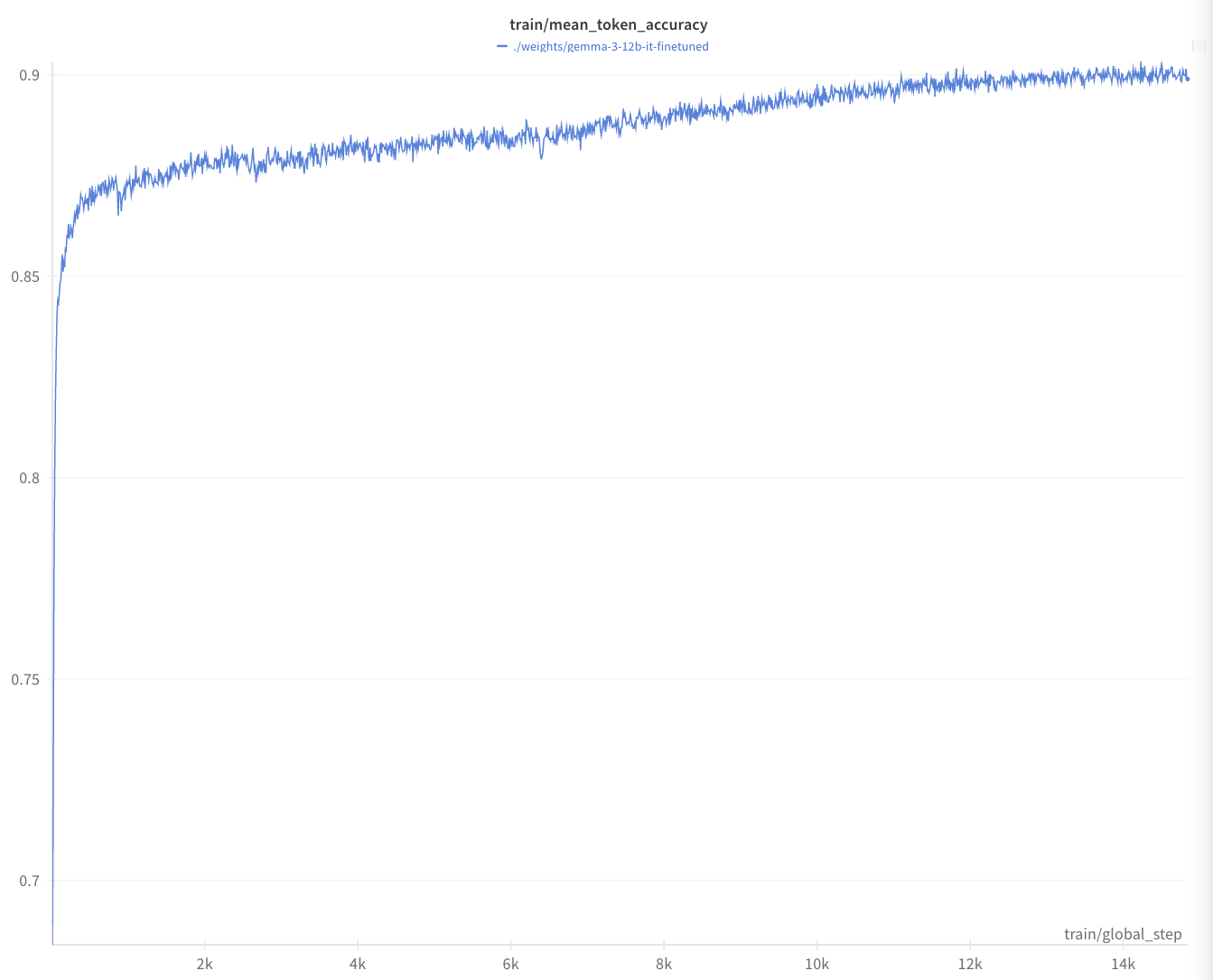

Launch and monitor training

Start a training job, inspect logs, and watch checkpoint evals.

Promote to deployment

Turn the completed training output into a production serving path.

Talk to an engineer

Meet with our team if you want help with dataset strategy, distillation, or rollout planning.